Teaser

Here is the current teaser for the first and only analog photo lab/darkroom simulator for virtual reality (as far as I know).

Overview

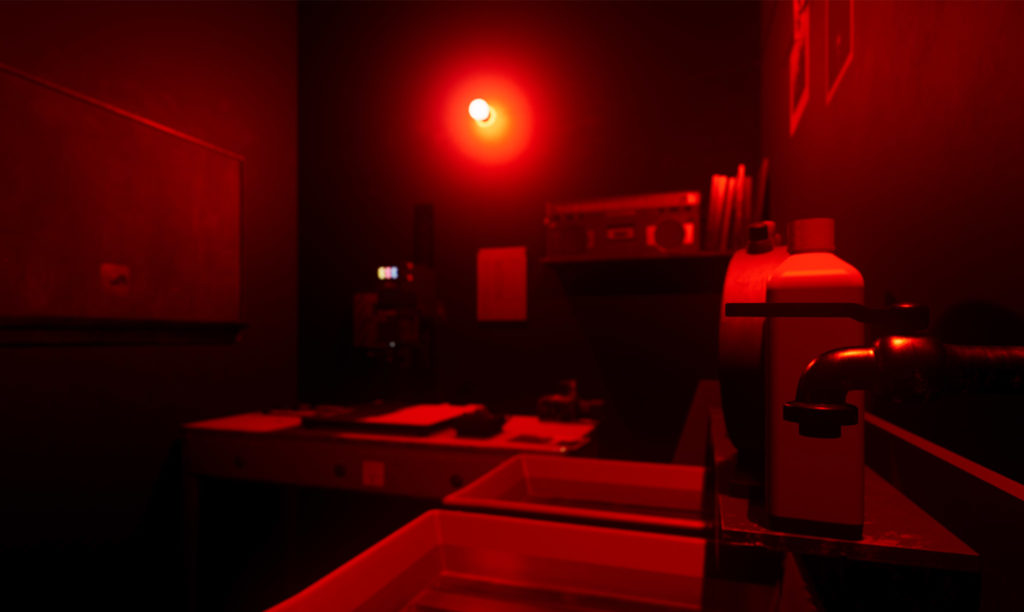

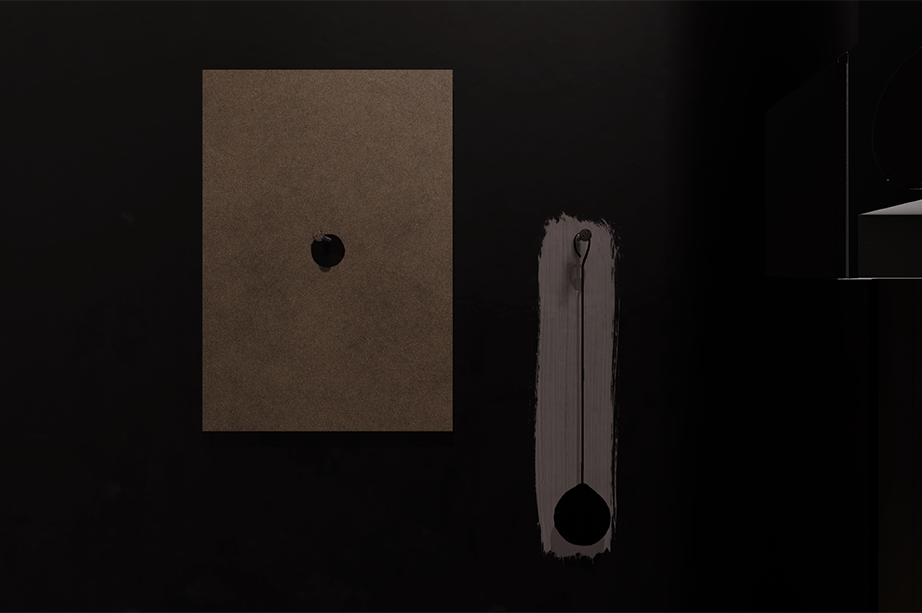

Here are a few more images of what it currently looks like. I will keep this updated whenever I make bigger changes.

So how does this all work?

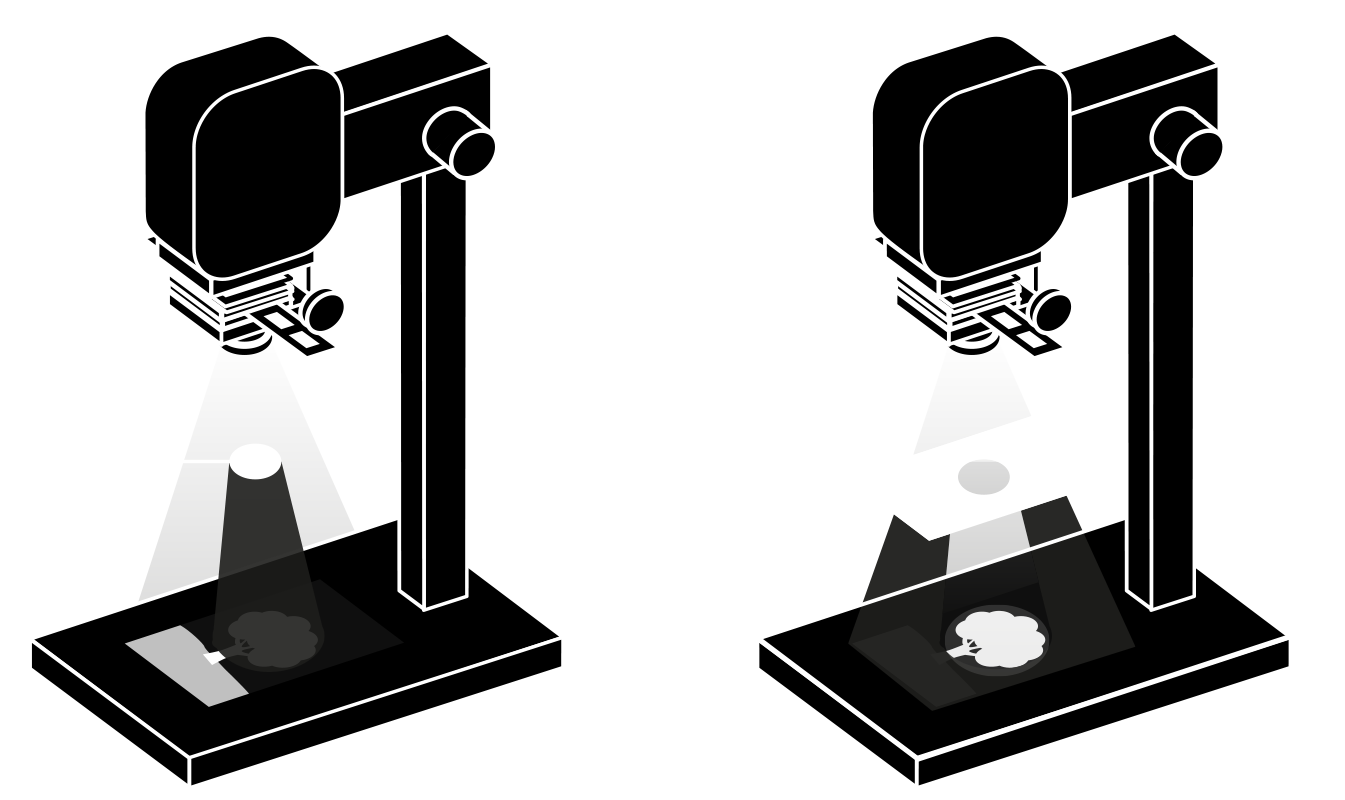

These are the rough steps for printing a black-and-white picture in the darkroom. I left out some more detailed steps, such as printing test strips to determine the right exposure, and dodging and burning, which is explained in the next chapter. Still, this should give you a good understanding of how it all works. To be honest, it is not that hard: it just takes a bit of practice and love for the medium.
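Test strips are only mentioned in passing above, so here is a small illustration of one common way to plan them. This is a generic darkroom technique, not necessarily how the simulator handles test strips. The idea is that the whole strip is exposed first, then a card is moved across it band by band, so each band collects more light than the one covered before it. This sketch uses f-stop steps, where each band gets a fixed fraction of a stop more than the previous one. The function name, base time, and band count are illustrative assumptions.

```python
def fstop_test_strip(base_seconds: float, bands: int, stop_fraction: float = 1 / 3):
    """Plan an f-stop test strip made by progressively covering the paper.

    Band i should end up with base_seconds * 2**(i * stop_fraction) seconds of light.
    Returns (totals, steps):
      totals[i] is the cumulative exposure band i receives,
      steps[i] is how long to expose before covering band i with the card.
    """
    totals = [base_seconds * 2 ** (i * stop_fraction) for i in range(bands)]
    # The first step exposes the whole strip; each later step only adds the difference,
    # because every band still uncovered keeps accumulating light.
    steps = [totals[0]] + [later - earlier for earlier, later in zip(totals, totals[1:])]
    return totals, steps
```

For example, `fstop_test_strip(4, 4, 1)` gives full-stop bands of 4, 8, 16 and 32 seconds, reached by exposing for 4, 4, 8 and 16 seconds and covering one more band after each step. Using f-stop rather than fixed-second increments keeps the visual difference between neighboring bands roughly even.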

Dodging & Burning

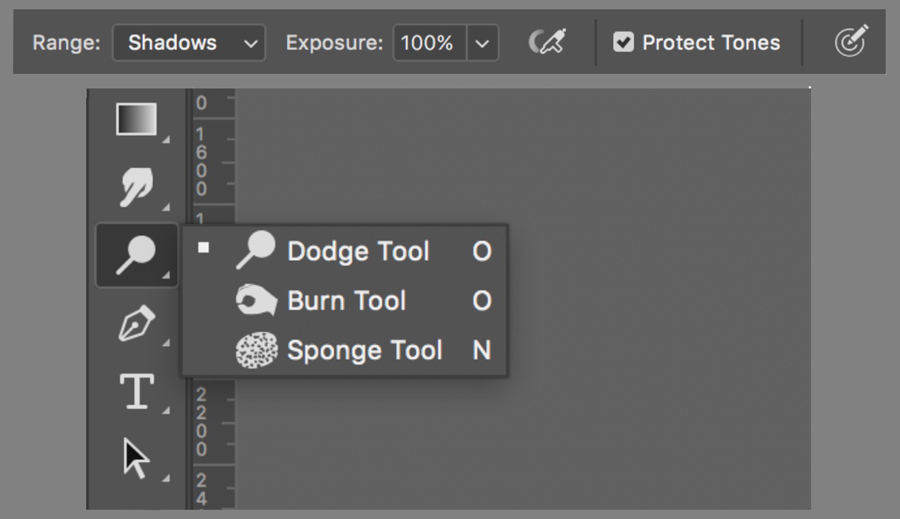

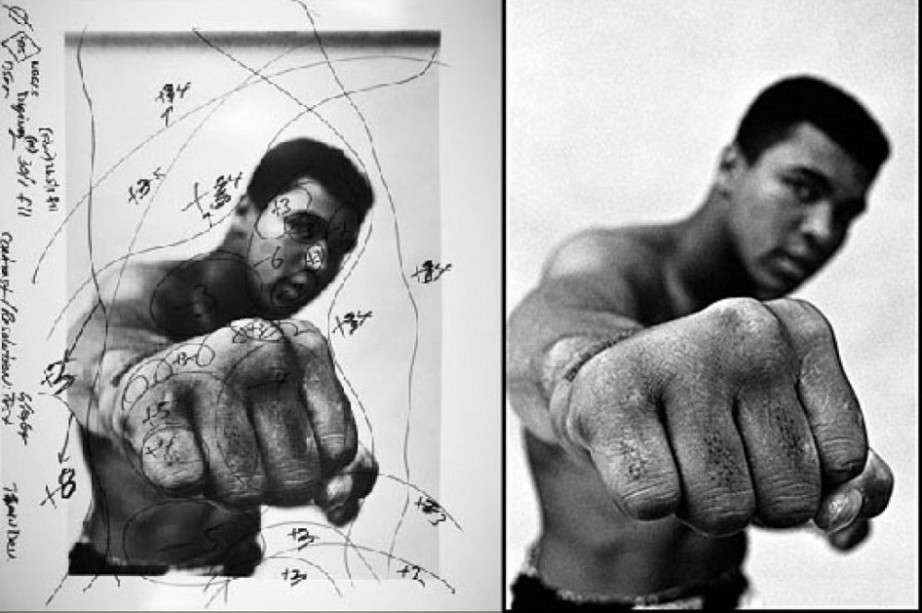

If you use Photoshop, you might be familiar with the dodge and burn tools, which selectively brighten or darken areas of your image. Those tools, like many others, originate from the analog darkroom.

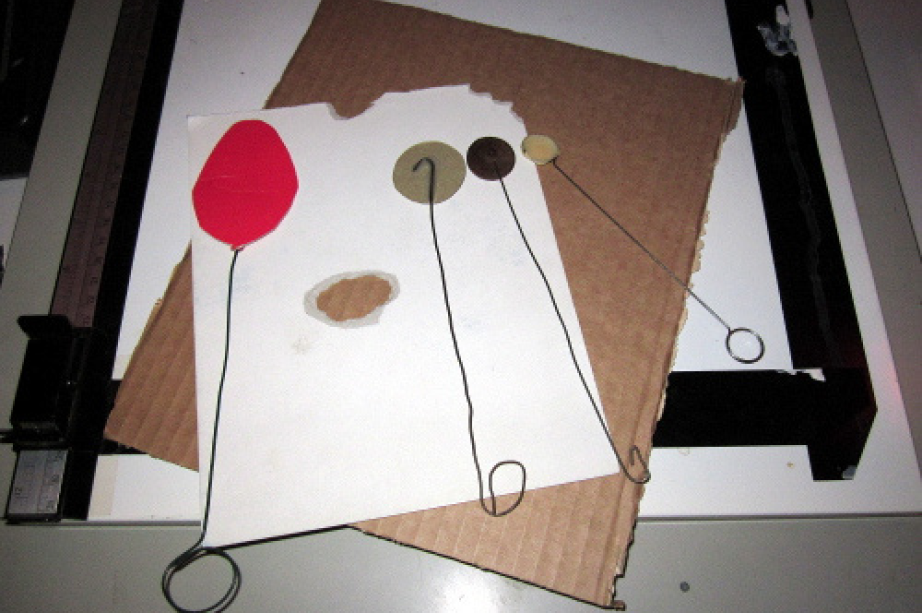

In the darkroom, you dodge by selectively blocking the light from the enlarger head in certain areas to reduce the darkening (= make it brighter), and you burn by adding more light to other areas to increase the reaction of the silver halide crystals (= make it darker). Photoshop uses a hand icon for its burn tool, but many analog printers prefer a piece of cardboard with a hole in the middle for burning, because it lets you see the image projected onto the cardboard while you work.

You might be familiar with images like the one of Muhammad Ali (© by Thomas Hoepker/Magnum) with lots of numbers scribbled on them to mark areas that need additional burning (e.g., +3 seconds on his temple = darker/more detail) or areas that need to be dodged (e.g., -5 seconds on his stomach = brighter).
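Those scribbled numbers are essentially a per-region exposure plan. The following sketch makes that explicit under two simplifying assumptions: the print is a small grid of cells, and every mark covers a rectangle. Real dodging and burning tools are kept moving and produce soft edges. The `Adjustment` class and `exposure_map` function are hypothetical names for illustration. The sketch applies a base exposure and then adds (burn) or subtracts (dodge) seconds per region, like the +3 s and -5 s marks in the example above.

```python
from dataclasses import dataclass


@dataclass
class Adjustment:
    """Hypothetical rectangular region: positive seconds = burn, negative = dodge."""
    top: int
    left: int
    bottom: int  # exclusive
    right: int   # exclusive
    seconds: float


def exposure_map(height, width, base_seconds, adjustments):
    """Return a height x width grid with the total exposure time (s) of each cell."""
    grid = [[float(base_seconds)] * width for _ in range(height)]
    for adj in adjustments:
        # Clip the region to the paper so marks reaching past the edge are cut off.
        for y in range(max(0, adj.top), min(height, adj.bottom)):
            for x in range(max(0, adj.left), min(width, adj.right)):
                grid[y][x] += adj.seconds
    # Dodging can only hold back light the base exposure would have delivered.
    return [[max(0.0, t) for t in row] for row in grid]
```

With a 12-second base exposure, a +3 s burn region ends up at 15 seconds and a -5 s dodge region at 7 seconds, while unmarked areas stay at 12 seconds.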

Contrast Adjustment

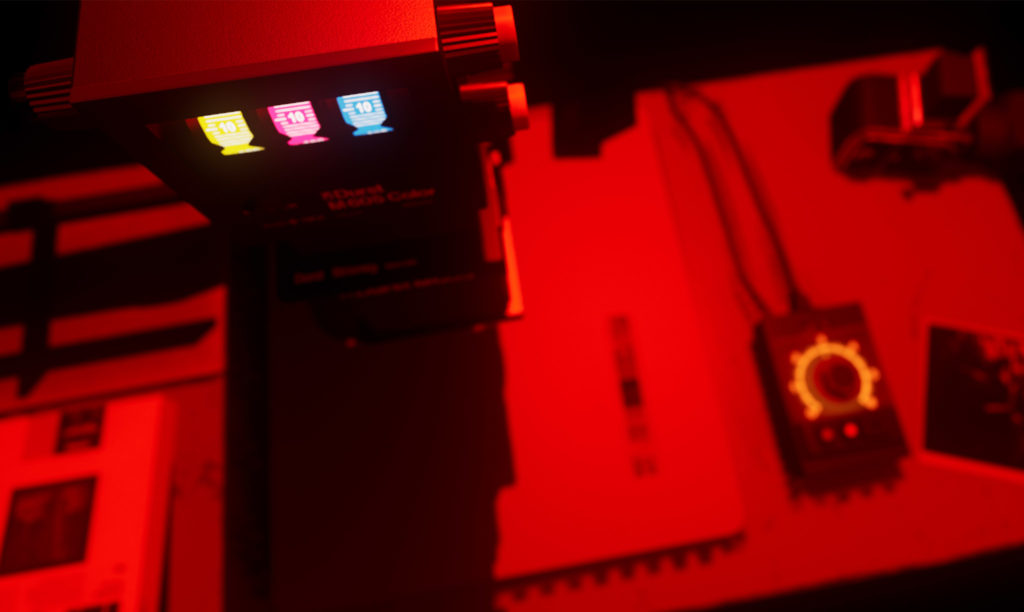

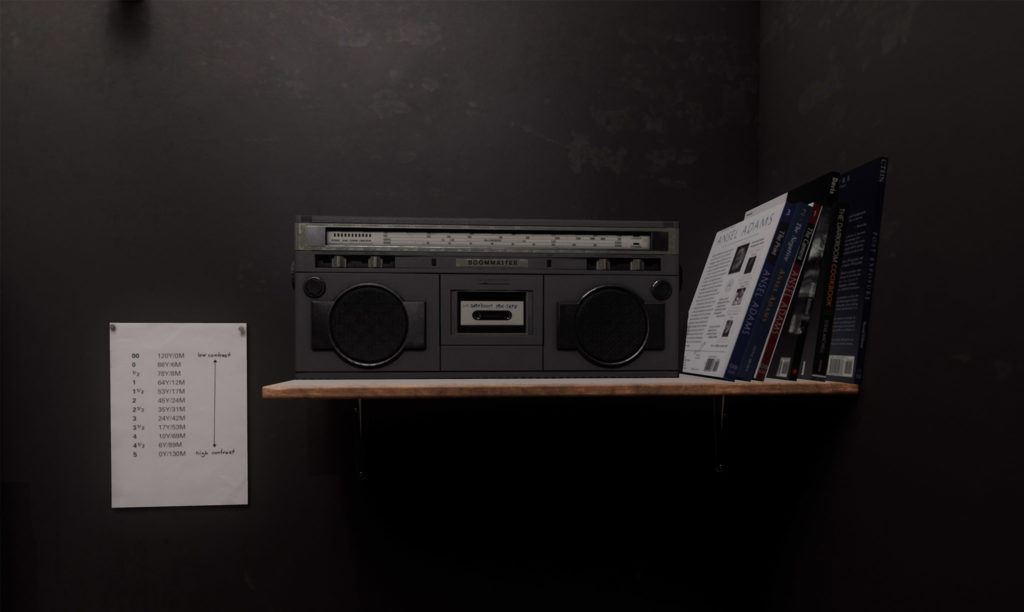

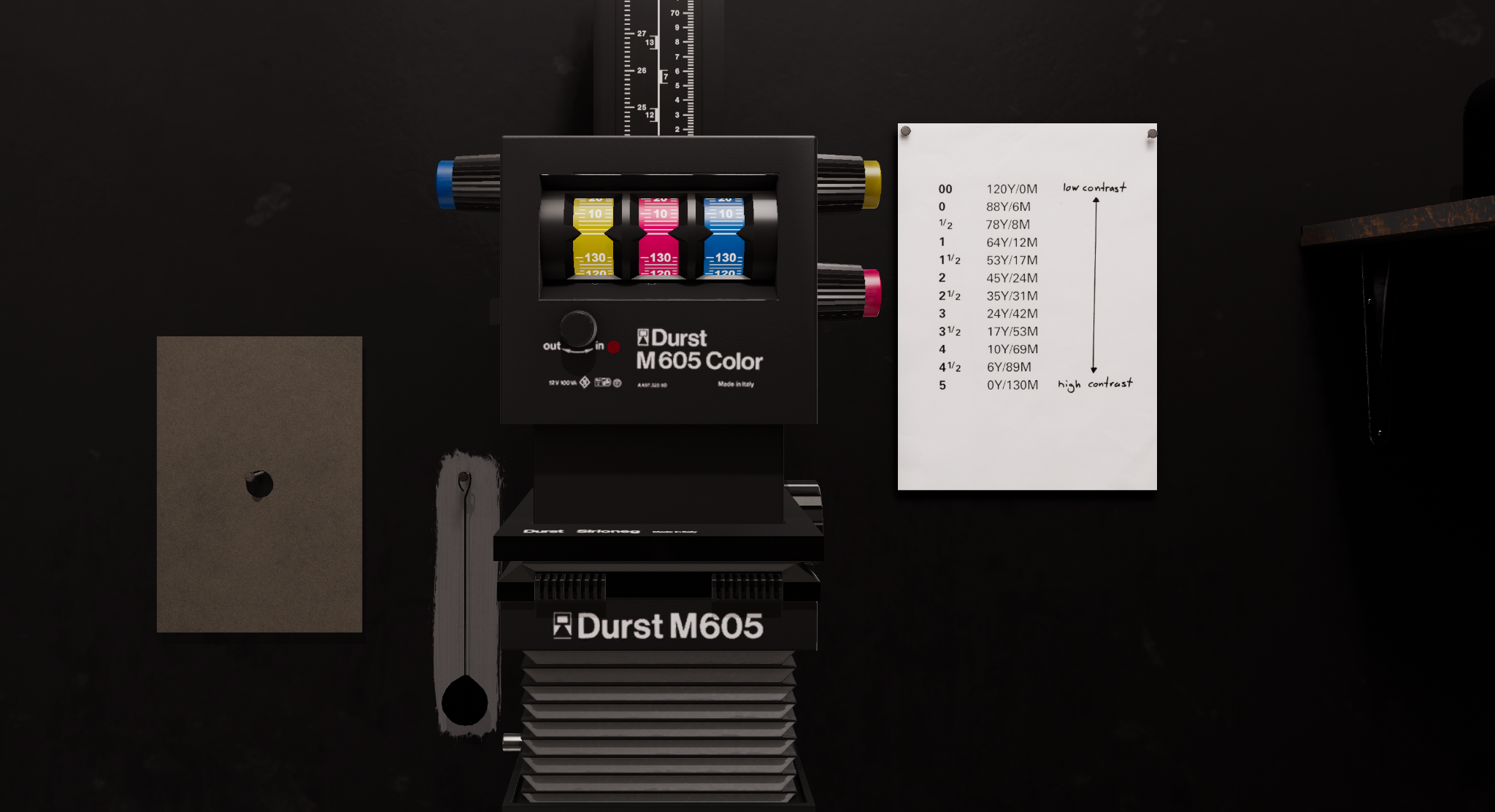

In a traditional darkroom, the paper determines the contrast of the resulting image. Graded papers come in many variants with fixed contrast grades, ranging from low to very high contrast.

I usually prefer multigrade papers, which have variable contrast, so I can adjust the contrast during the print session by changing the color filter dials on the enlarger head.

The paper contains multiple light-sensitive emulsions that react differently to specific parts of the light spectrum. This makes it easy to dial in the contrast you want for an image, and even to mix different contrast levels for specific areas of the image (read more about split-grade printing in Tim Layton's excellent ebook on the topic).
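To make the filter-dial idea more concrete, here is a deliberately simplified toy model. It assumes two emulsion components: a soft, low-contrast one that responds to green light and a hard, high-contrast one that responds to blue light. A yellow filter absorbs blue and a magenta filter absorbs green, and the dial settings are expressed as optical densities. In this model the filters are treated as perfect, and the logistic response curves, their slopes, the speed points, and the maximum density are all made-up placeholder values, not measured paper data.

```python
import math


def layer_exposures(exposure, yellow_density, magenta_density):
    """Split one exposure between the soft and hard emulsion components.

    In this toy model, yellow (absorbs blue) only attenuates the hard,
    blue-sensitive component, and magenta (absorbs green) only attenuates
    the soft, green-sensitive component.
    """
    soft = exposure * 10 ** (-magenta_density)
    hard = exposure * 10 ** (-yellow_density)
    return soft, hard


def emulsion_density(exposure, slope, speed_log_e, d_max):
    """Toy logistic characteristic curve: density as a function of log10 exposure."""
    if exposure <= 0:
        return 0.0
    z = slope * (math.log10(exposure) - speed_log_e)
    # Numerically stable logistic so tiny exposures cannot overflow math.exp.
    if z >= 0:
        s = 1 / (1 + math.exp(-z))
    else:
        e = math.exp(z)
        s = e / (1 + e)
    return d_max * s


def print_density(exposure, yellow_density, magenta_density):
    """Combined density of both components (placeholder curve parameters)."""
    soft_e, hard_e = layer_exposures(exposure, yellow_density, magenta_density)
    soft = emulsion_density(soft_e, slope=3.0, speed_log_e=1.0, d_max=1.1)
    hard = emulsion_density(hard_e, slope=9.0, speed_log_e=1.0, d_max=1.1)  # steeper = harder
    return min(2.1, soft + hard)  # clamp to a placeholder paper Dmax
```

Dialing in heavy magenta lets mostly the steep, hard curve respond, so the same two exposures produce a larger density difference (more contrast) than with heavy yellow. In this model, a split-grade print corresponds to two exposures, one through heavy yellow and one through heavy magenta, whose soft and hard exposures add up per component.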

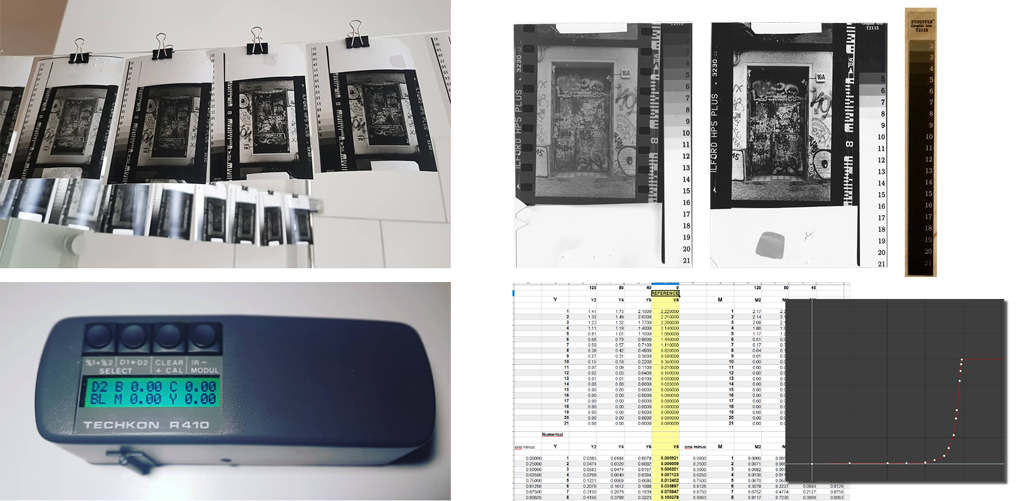

To simulate this, I bought a Stouffer 21-step wedge with standardized density values, printed a lot of test charts with different light settings, measured the resulting prints with a proper densitometer, and used those values in Unreal.
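A calibration like this essentially comes down to a lookup table that maps the wedge's known step densities to the densities measured on the print, with interpolation in between. Here is a minimal sketch. It assumes the nominal values of a Stouffer 21-step wedge (0.05 to 3.05 in 0.15 increments); each individual wedge ships with its own calibration values, which should be used instead. The function names are hypothetical, no measured data is included, and the sketch does not show how the table ends up in Unreal (for example, as a curve asset or a texture).

```python
from bisect import bisect_left

# Nominal densities of a Stouffer 21-step wedge: 0.05 to 3.05 in 0.15 steps.
WEDGE_DENSITIES = [round(0.05 + 0.15 * i, 2) for i in range(21)]


def transmission(density):
    """Fraction of light passed by a step of the given optical density (T = 10^-D)."""
    return 10 ** (-density)


def make_lut(wedge, measured):
    """Return a function mapping a wedge density to a linearly interpolated print density.

    `wedge` must be sorted ascending; `measured` holds one densitometer reading
    of the print per wedge step.
    """
    if len(wedge) != len(measured):
        raise ValueError("need one measurement per wedge step")

    def lookup(d):
        if d <= wedge[0]:
            return measured[0]
        if d >= wedge[-1]:
            return measured[-1]
        i = bisect_left(wedge, d)  # wedge[i - 1] < d <= wedge[i]
        x0, x1 = wedge[i - 1], wedge[i]
        y0, y1 = measured[i - 1], measured[i]
        return y0 + (y1 - y0) * (d - x0) / (x1 - x0)

    return lookup
```

Building one such table per filter setting would give a family of response curves that can be looked up at runtime instead of being modeled analytically.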

Blur & Defocus

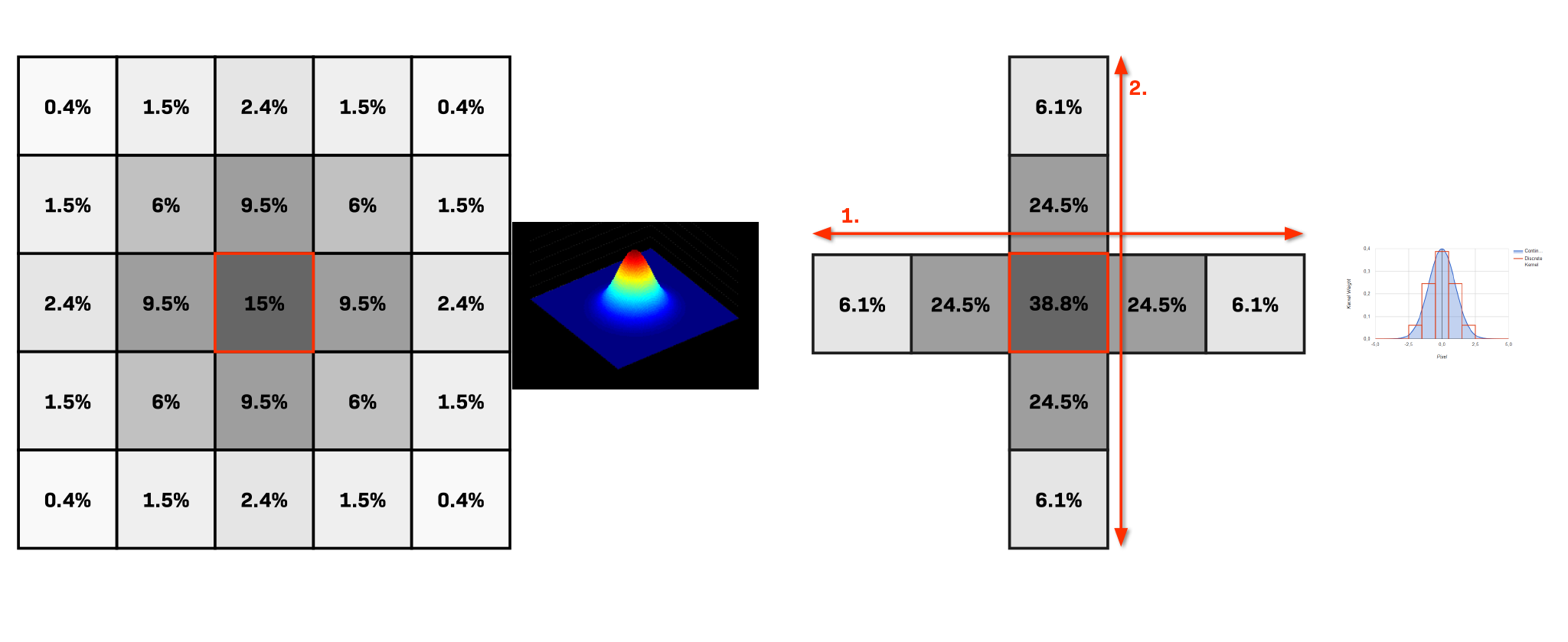

Coming from a visual effects background, I was aware that a real-time blur for VR at 90 frames per second could be quite challenging, so I spent a fair amount of R&D time finding the best and fastest blur method to simulate this accurately in the app.

I ended up using a two-pass Gaussian blur approximation that scales nicely and is implemented in HLSL inside Unreal.
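A Gaussian blur can be done in two passes because it is separable. Blurring every row with a 1D Gaussian kernel and then every column gives the same result as a full 2D Gaussian, but for a kernel k taps wide it costs about 2k samples per pixel instead of k². The following Python sketch shows that idea on a small grayscale image stored as nested lists. It is not the app's HLSL shader. The 3-sigma kernel radius and the clamp-to-edge sampling are assumptions made for this sketch.

```python
import math


def gaussian_kernel(sigma):
    """Normalized 1D Gaussian weights covering about +/- 3 sigma."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(x * x) / (2 * sigma * sigma)) for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row or column, clamping reads at the edges."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(n - 1, max(0, i + k - radius))
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(image, sigma):
    """Two-pass separable Gaussian blur: horizontal pass, then vertical pass."""
    if sigma <= 0:
        return [row[:] for row in image]
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in image]                  # pass 1: rows
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]       # pass 2: columns
    return [list(row) for row in zip(*cols)]                        # transpose back
```

For an enlarger simulation, sigma would grow with the distance from perfect focus. On the GPU, each pass is typically one full-screen shader pass writing to an intermediate render target.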

To avoid re-evaluating it on every tick, the blur is only recalculated when the focus is adjusted or the enlarger head is moved, and the result is written to a texture.
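This is the classic dirty-flag pattern: changing an input only marks the result as stale, and the per-tick update does the expensive work only when something is actually stale. Here is a minimal sketch. The class name, the `focus` and `head_height` parameters, and the `blur_pass` callback are hypothetical stand-ins. In the app, the cached result is a GPU texture rather than a Python value.

```python
class EnlargerProjection:
    """Re-run an expensive blur only after focus or head height has changed."""

    def __init__(self, blur_pass):
        self._blur_pass = blur_pass  # stand-in for the real blur that writes the texture
        self._focus = 0.0
        self._head_height = 0.0
        self._dirty = True           # nothing has been rendered yet
        self.texture = None
        self.blur_count = 0

    def set_focus(self, value):
        if value != self._focus:     # ignore no-op updates
            self._focus = value
            self._dirty = True

    def set_head_height(self, value):
        if value != self._head_height:
            self._head_height = value
            self._dirty = True

    def tick(self):
        """Called every frame; only does real work when an input changed."""
        if self._dirty:
            self.texture = self._blur_pass(self._focus, self._head_height)
            self._dirty = False
            self.blur_count += 1
        return self.texture
```

At 90 frames per second, ticking for a full second with an untouched enlarger runs the blur once instead of 90 times.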

Fluids & Chemicals

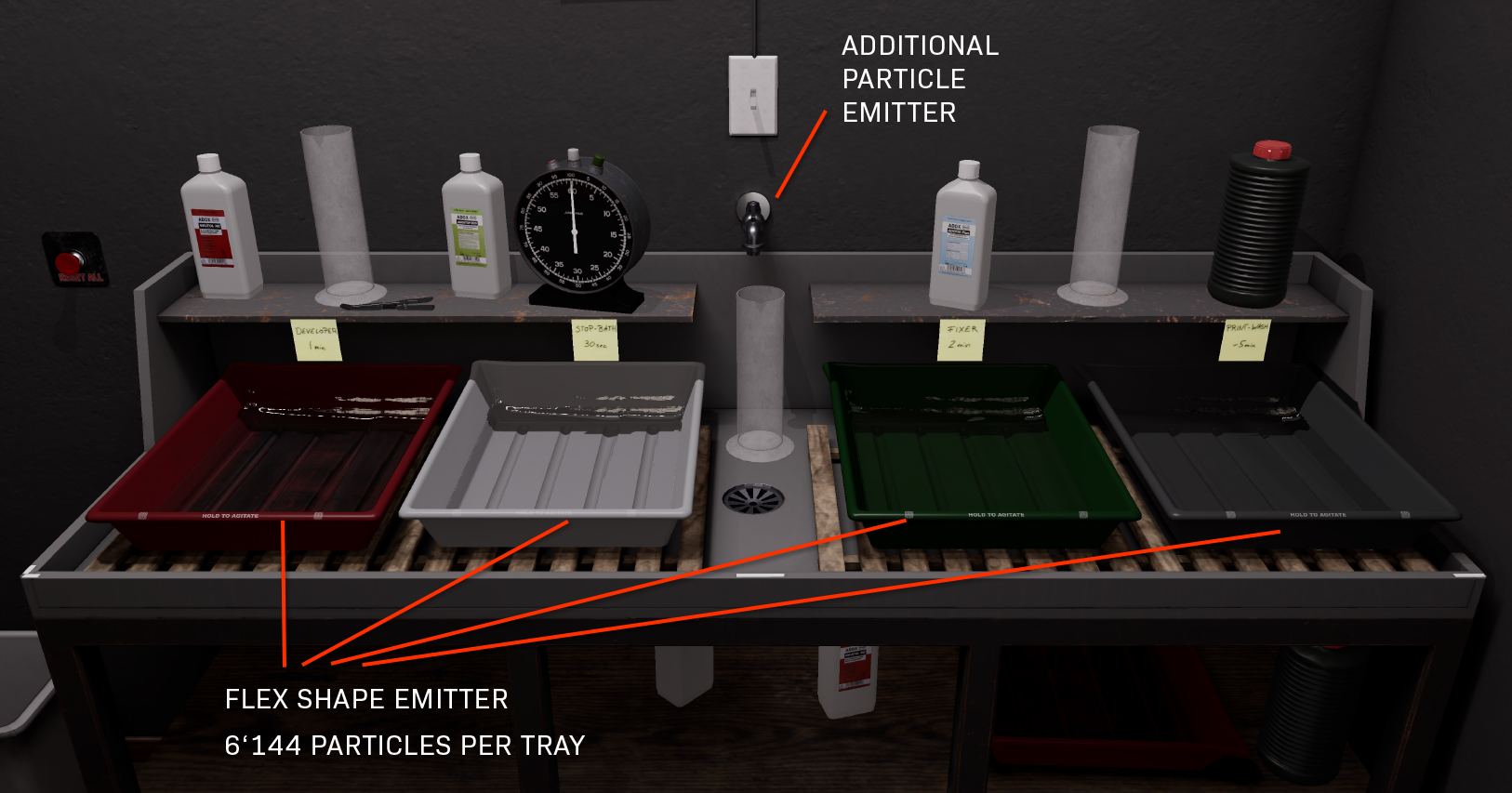

While I am not quite done exploring this area yet, I am happy to have something that feels like realistic fluids. When I started doing analog darkroom work, this was what I was looking forward to most: playing with chemicals and fluids! So it had to be possible to splash around in VR too. I looked around for alternative ways to do it, but the NVIDIA FleX physics system blew everything else out of the water.

I am currently using a custom GameWorks branch of Unreal provided by 0lento on GitHub. I will spend some more time making the fluids look as good as possible, and I plan to integrate a chemical mixing system I have been working on recently soon.